If patriotism is the last refuge of the scoundrel, then the placebo effect is the last refuge of alternative medicine.

When backed into a corner by data showing that their remedies are no more effective than placebo, alternative medicine practitioners will cry ‘but we know placebos are powerful, so that means it works!’

This claim isn’t limited to purveyors of nonsense medicine and their fans. When skeptics give public talks on homeopathy, even an audience of skeptics will contain some who respond: ‘homeopathy is just a placebo, and we know placebos work, so homeopathy works too.’

But the primary evidence for the ‘powerful’ placebo effect is far weaker than most people realise. In my previous article, I mentioned the 2010 Cochrane review of placebo effects. It analysed 202 clinical trials that included both ‘placebo’ and ‘no treatment’ arms, comparing how the placebo patients fared against their untreated counterparts.

The review found no evidence that placebos had important clinical effects. What effects it did find were limited to patient-reported outcomes and were difficult to distinguish from biased reporting.

We know from the field of psychology that patient-reported data can be unreliable. Response bias, or subject-expectancy effects, can lead patients to report what they think should be happening, rather than what really is happening. Experimental subordination, or demand characteristics, can lead patients to report what they think their clinicians want to hear. Recall bias can mean patients do not accurately report a change in their condition from baseline, because they misremember how they felt at baseline.

This could be uncharitably characterised as ‘everybody lies’, but the reality is likely more subtle: patients may not even be aware that they are bending the truth in their self-reports, or that even slight bending can skew the results, especially in smaller studies.

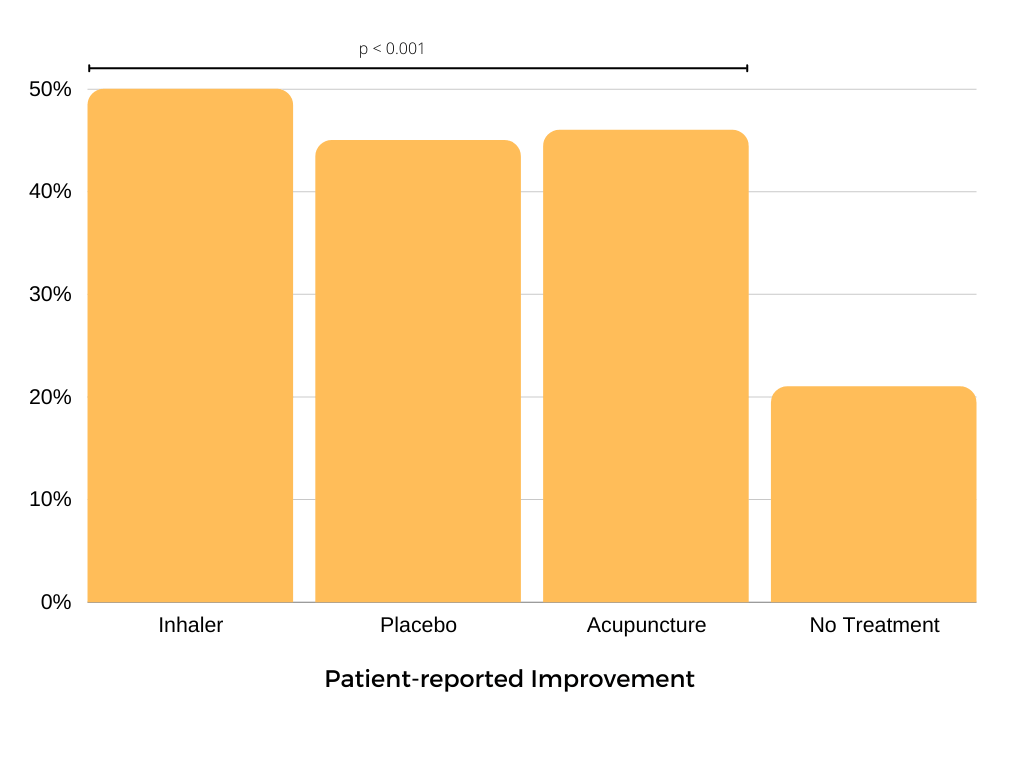

An excellent illustration of the unreliability of patient-reported effects is the paper *Active Albuterol or Placebo, Sham Acupuncture, or No Intervention in Asthma*, published in the New England Journal of Medicine in 2011.

Forty-six patients with mild-to-moderate asthma were recruited to the study and, over the course of several visits to the clinic, each was given the following four interventions in a random order:

- a standard albuterol (salbutamol) inhaler;

- a fake ‘placebo’ inhaler;

- sham ‘placebo’ acupuncture; or

- no treatment.

This was a cross-over study, so every patient received every intervention. Each was given three times, for twelve sessions in total, and after each session patients were asked to rate the improvement in their asthma symptoms on a scale from 0 (no improvement) to 10 (complete improvement).
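To picture what a cross-over design means in practice, here is a minimal Python sketch that builds a twelve-session schedule for each of the 46 patients, with every intervention appearing three times in shuffled order. It assumes a simple random shuffle of all twelve sessions; the trial’s actual randomisation scheme isn’t described here, and the names `INTERVENTIONS`, `REPEATS` and `crossover_schedule` are hypothetical.

```python
import random

# The four interventions described above; each is given three times per patient.
INTERVENTIONS = ["albuterol inhaler", "placebo inhaler", "sham acupuncture", "no treatment"]
REPEATS = 3


def crossover_schedule(rng: random.Random) -> list[str]:
    """Hypothetical 12-session schedule: every intervention appears REPEATS
    times, in a random order (assumed: a plain shuffle, not the trial's scheme)."""
    sessions = INTERVENTIONS * REPEATS  # 4 interventions x 3 = 12 sessions
    rng.shuffle(sessions)               # randomise the order in place
    return sessions


rng = random.Random(2011)  # fixed seed only so the example is reproducible
schedules = {patient: crossover_schedule(rng) for patient in range(1, 47)}
print(schedules[1])
```

Because every patient receives every intervention, each serves as their own control, which is what lets a study of this size compare all four arms.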

The study found that patients using the real salbutamol inhaler reported a 50% improvement from baseline, while patients in the no-treatment arm reported just a 21% improvement. Astonishingly, patients using the fake inhaler reported a massive 45% improvement from baseline, almost as large as with salbutamol.

A startling result, I’m sure you’d agree. A fake inhaler, with no medicine in it, improved asthma symptoms almost as much as salbutamol. In fact, there was no statistically significant difference between salbutamol, the fake inhaler, and the sham acupuncture. A powerful placebo indeed, and let’s be honest, this is bad news for salbutamol, which according to these data is no better than placebo.

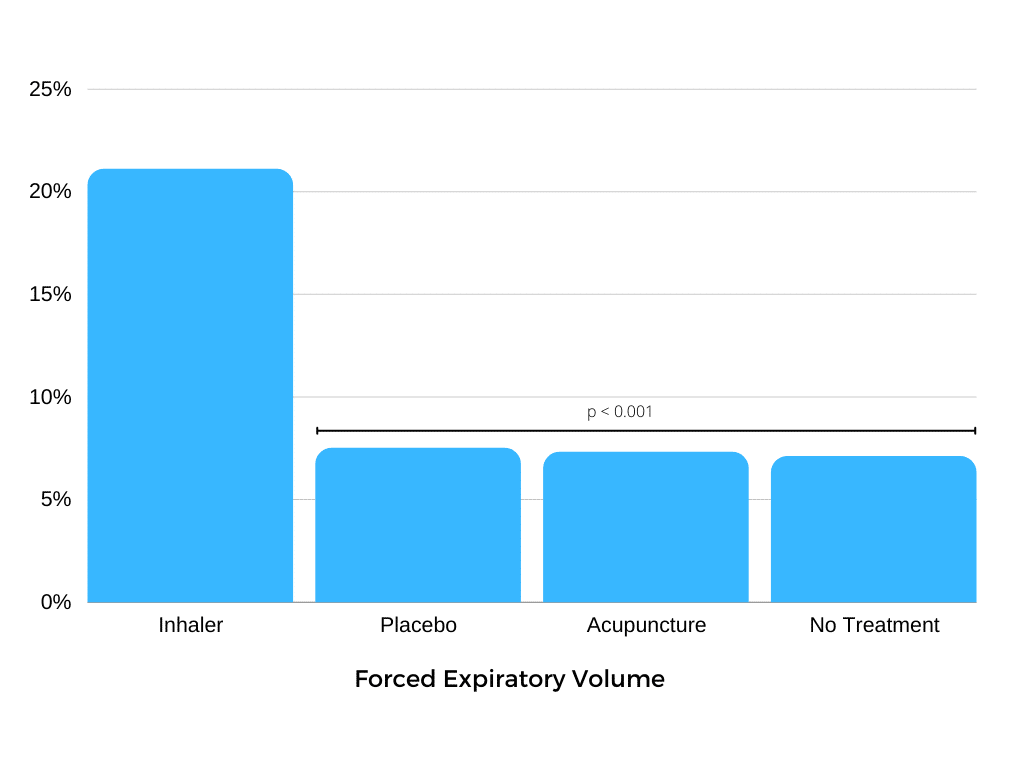

But those are only the data gathered when the patients were asked how they thought they were doing. The study did not rely solely on that measure; it also recorded an objective measure of lung function: Forced Expiratory Volume (FEV) or, roughly speaking, how hard you can blow into a tube.

The FEV data tell quite a different story from the patient self-reports. Again, patients using salbutamol had an objectively measurable 20% improvement in lung function, as measured by FEV. Patients getting no treatment had a much smaller 7.1% improvement. However, on this objective measure the placebo inhaler showed only a 7.5% improvement and the sham acupuncture only a 7.3% improvement, neither of which is statistically different from no treatment.
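To make the contrast between the two measures concrete, the group means quoted above can be put side by side. This short Python sketch (the names `SELF_REPORT`, `FEV`, `excess_over_no_treatment` and `placebo_share` are illustrative, not from the study) subtracts the no-treatment improvement from each arm and asks what fraction of salbutamol’s extra benefit the placebo inhaler reproduces. It works only on the reported averages, so it says nothing about statistical significance, which needs the trial’s patient-level data.

```python
# Mean improvements from baseline (%) as quoted in the article.
# The self-reported figure for sham acupuncture isn't quoted, so it is omitted.
SELF_REPORT = {"salbutamol": 50.0, "placebo inhaler": 45.0, "no treatment": 21.0}
FEV = {"salbutamol": 20.0, "placebo inhaler": 7.5,
       "sham acupuncture": 7.3, "no treatment": 7.1}


def excess_over_no_treatment(results: dict[str, float]) -> dict[str, float]:
    """Improvement beyond the no-treatment arm, in percentage points."""
    baseline = results["no treatment"]
    return {arm: round(value - baseline, 1)
            for arm, value in results.items() if arm != "no treatment"}


def placebo_share(results: dict[str, float]) -> float:
    """Fraction of salbutamol's excess benefit matched by the placebo inhaler."""
    excess = excess_over_no_treatment(results)
    return excess["placebo inhaler"] / excess["salbutamol"]


for name, results in (("Self-report", SELF_REPORT), ("FEV", FEV)):
    print(name, excess_over_no_treatment(results),
          f"placebo share: {placebo_share(results):.0%}")
```

On these figures the placebo inhaler reproduces roughly 83% of salbutamol’s extra self-reported benefit, but only about 3% of its extra FEV benefit.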

There was no placebo effect when lung function was objectively measured. The ‘placebo’ interventions had the same effect as no treatment. So why did patients report a large improvement with the fake inhaler, when we know their lung function improved no more than it did with no treatment at all?


The answer is bias. When you’re taking part in a clinical study, there is strong social pressure to say what you think you should. Patients have taken the inhaler, so they should be feeling better, right? Maybe they even convince themselves they are feeling better. They may misremember how bad they felt when they arrived. So when a doctor asks them to rate how they’re feeling, that’s what they say: “I’m much better, doc, thanks.”

They aren’t lying, certainly not maliciously. They genuinely believe that they are or will be doing better, even though we know from their lung function tests that they haven’t improved any more than if we had done nothing. There is no magic here, no demonstration of the amazing power of the mind over the body. Just psychology and statistics.

To the extent that we observe real, objective clinical effects, they do not appear to be caused by the placebo. To the extent that we receive subjective reports of placebo effects, they look very much like biased reporting.

In this case we could dig deeper and use objective data, gathered at the same time, to assess each intervention free of that subjective bias. But what if no objective measure were available? For outcomes like pain, we have only patient reports to go on: we cannot independently and objectively assess how much pain a patient is experiencing.

This isn’t an argument against the use of subjective endpoints in clinical research. Quite the opposite: for some outcomes we have no alternative but to depend on subjective reports. We must, however, do so with a clear recognition of their limitations and their vulnerability to bias.

Building a monument to the placebo on those kinds of unsteady foundations is only going to mislead both patients and clinicians. Taking the subjective data from this study at face value, we could have reasonably concluded that salbutamol doesn’t work, and gone on to deprive asthma patients of an effective intervention, potentially putting lives at risk.