Gather round friends, I want to tell you a true horror story. I can’t promise a twist ending; the monster is still going to turn out to be humans with funny hats on. What I can promise is a direct connection to a recent high-profile act of violence, and an increased anxiety about unregulated internet speech.

Our story begins in the lead-up to the 2016 presidential election. Like many very online Millennials, I was posting a lot of political content to my personal Facebook wall and getting some rather inflammatory pushback from individuals on the right. It got so bad that I started to get private messages from friends begging me to find an alternative venue. The final straw came when a Native American friend saw a thread where one right-wing individual repeatedly referred to Dakota Access Pipeline protesters as “primitives” and “savages”. We’ll call this right-winger Bruce. Bruce, a few other regular participants, and I decided to take our debates to a private group. A place where people from a truly broad range of perspectives could fully express their most controversial views at a safe distance from the rest of humanity. There was only one possible name for such a place: Monster Island.

As the resident mad scientist, I saw Monster Island as more than just a containment system for some of the worst people I’ve ever experienced: it was a place to experiment on those monsters, myself included. I wanted to study whether it was possible for people from radically different worldviews to debate in an environment that had informal guidelines but no officially enforced rules. It was already clear in 2016 that the regulation of online speech was going to be a rolling disaster, producing an endless stream of rage against the moderators, who were invariably cast as biased against the right. Donald Trump himself has claimed that sites like Twitter and Facebook discriminate against right-wing speech. Respectable experts have raised concerns about the lack of oversight for algorithmic moderation. Our failures to address this problem proactively seem to promote a desire in some to return to a “golden age” of the internet, before things got so big that moderation of speech on an epic scale became necessary. I had my doubts that less moderation was really the solution to our problems, and Monster Island presented a perfect chance to test my theories.

I would say things went about as well on Monster Island as they did in the Stanford Prison Experiment, except we let the disaster run for four years instead of just a week. The only guideline we had going in was “what happens on Monster Island stays on Monster Island”, and yes, I realize the irony of telling you about that here in a public forum. Think of this article as the letter at the beginning of a Lovecraft story, warning you of a place you should not go, only now you’re too far in to turn away. The forbidden knowledge of Monster Island calls to you. If I disappear in the night, you’ll know why.

Almost immediately we had to add the guideline “no deleting” as individuals started to delete sections of posts to mess with arguments or cover spots where they’d messed up. I call it a guideline because at this point we hadn’t had to actively enforce anything beyond stating the norms. What became clear though was that even the existence of those unenforced guidelines was an affront to some monsters’ sensibilities, and so they set to work testing the fences for weaknesses. They used all the typical troll techniques. Do things that are very close to breaking the guidelines and then force everyone to argue over whether they count. Look for other horrible things they could do that weren’t technically in violation of any guidelines, just to see if it would force us to develop new guidelines in response. It was always a losing battle, because there is a fundamental asymmetry between order and chaos, and chaos always has the advantage in tempo. One troll named Ryan Balch, whose name I have not changed, for reasons that will become apparent, openly declared his intentions to destroy Monster Island, just to prove he could. Several trolls joined his cause.

The result was several years of the purest banality of evil. We ended up needing to add rules against doxxing, blocking admins, explicit threats of physical violence, and taking photos from people’s personal profiles and photoshopping them into sex acts with military dictators. Meanwhile, the quality of discourse deteriorated from semi-functional, where some folks could have actual arguments or at least do a dance that looked vaguely like presenting evidence, to endless spam of the most disturbing memes you’ve thankfully never seen. Here’s a relatively mild example from Ryan Balch that still conveys the type of content we were inundated with:

At some point in the first year we had to start kicking people, though not for material like this, since this post did not violate any Monster Island rules. People really only got kicked when they explicitly broke a stated rule. Even then, it just became a game of whack-a-mole, as every troll had multiple sock puppet accounts. Of course, once that happened the reactionary behavior went up exponentially. We tried everything we could to stabilize the situation. Monster Island had always had left-leaning demographics, but we tried hard to invite more right-wing folks to the island. That was how we ended up with a horde of Ryan Balches. Perhaps it was just the result of which groups our right-wingers ran in, but we found it nearly impossible to bring in right-wing individuals who would even try to engage in debate before dropping some random hate speech. We even formed a leadership group where I represented the left, Bruce represented the right, and our mutual friend – let’s call him Peter – who helped us form the group originally stood for the moderates. Peter was technically a moderate conservative. Having leadership that explicitly leaned right was still insufficient. The right-wingers claimed I still held absolute power over the group, and they claimed Peter was too soft on the left and so Bruce was effectively outnumbered. Nothing short of giving Ryan Balch a leadership position was going to satisfy them, and thankfully we didn’t go with that option.

By year three it became clear that I’d gotten all the results I was going to get from this experiment, and the toxicity of the island was consuming more and more of my time and life-force, so about a year ago I gave up and swam for shore. Many would say it’s absurd I stuck with it that long, others would call me the worst monster of all for letting the experiment go on as long as I had, and they’re all probably correct. Some of the members seemed to still enjoy the group though, so I passed control over to Peter, set sail, and never looked back. I felt comfortable concluding that unmoderated discourse faces a tragedy of the commons no different than any other unregulated communal resource. There are places online where people who strongly disagree, up to a point, can engage productively. What those groups have in common is substantial rules and heavy moderator enforcement.

I approach the end of my cursed tale, and I promised you a connection to real world violence. If you haven’t placed Ryan Balch yet, give these two articles a quick read.

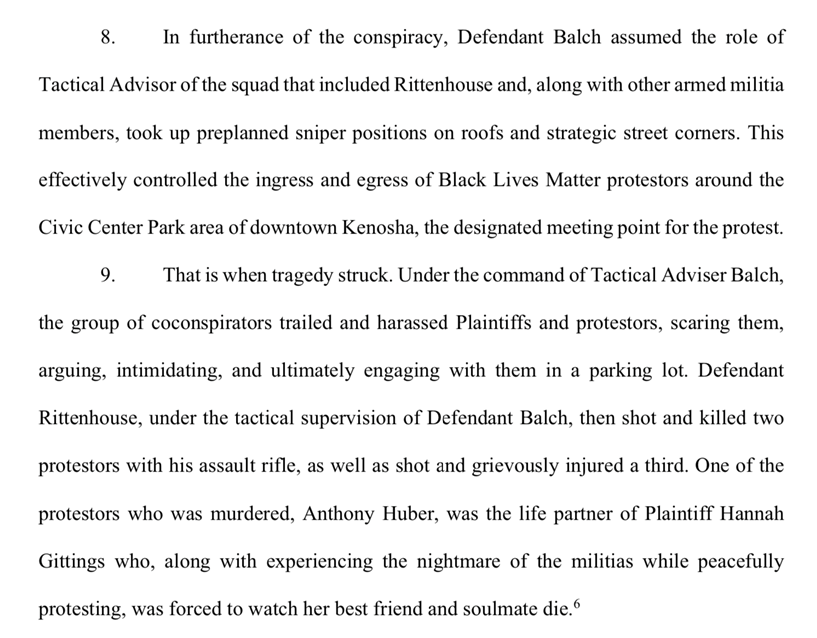

Ryan Balch got his three seconds of fame as the white supremacist who joined Kyle Rittenhouse and his militia buddies, allegedly as their “tactical advisor”, shortly before Kyle was involved in a shooting that claimed the lives of two protesters. Here is the relevant passage from the case filed against Balch, Rittenhouse, several militia groups, and Facebook:

While this lawsuit is unlikely to succeed for various reasons, it drives home how real-life violence is directly connected to online radicalization and organization. I can say pretty confidently that any material the media found when going through Ryan’s internet history pales in comparison to what he was sharing behind closed doors. As far as we know, Kyle Rittenhouse himself did not express white nationalist sentiments the way Ryan Balch frequently did. What Kyle did frequently post was pro-police sentiment, and America is actively wrestling with the overlap between our policing system and white nationalism.

It’s hard to believe that a person from a Facebook group with fewer than 400 members ended up directly connected to vigilante violence against protesters. When we saw the news, Peter and I decided it was finally time, and Monster Island sank back into the internet ocean. I’m happy to see it gone, but I’m haunted by the dark irony that Ryan Balch ultimately made good on all his promises. He got out in the streets, and in doing so he destroyed Monster Island.

Like all highly successful disasters, Monster Island is rich in moral take-aways. Personally, I learned that I do not want to spend my time engaging with people like Ryan Balch, even as some Hail Mary attempt to change their minds or try to understand what mix of nature and nurture got them to that dark place. I do find learning about cult thinking and behavior endlessly fascinating, but I’ve no need to spend my daily life around it. The best I can do is pity, as that seems better than hate or anger.

More generally, I learned that discourse really only works if it’s properly moderated and everyone is committed to the system. That means that someone is going to have to be empowered to make decisions and enforce rules, and we’re going to have to find a way to invest enough trust to keep the discourse from collapsing. That’s a difficult prospect with so many individuals actively seeking to poison the discourse and keep it on life support so they can benefit from the dysfunction. I learned from Monster Island that the group of people who just want to watch the world burn is larger than anyone wants to admit and that their goal is the easiest one to achieve.

These days I spend my Facebook hangout time in the Philosopher in Space Facebook group, which requires almost zero real-time moderating for a few reasons. First, I moderate who’s allowed in, and the few times we’ve had toxic people in the group they haven’t lasted long. Also, we’re not trying to have it out over extremely controversial issues. Most of the group is some version of left/liberal, and the most heated debate ever was whether science and magic, and by extension sci-fi and fantasy, are really distinct things. I strongly encourage everyone to find their own Philosophers in Space group and avoid anything that feels too much like Monster Island. You may think you can go for just a peek, but rage is addictive and you’ll end up another trapped soul.

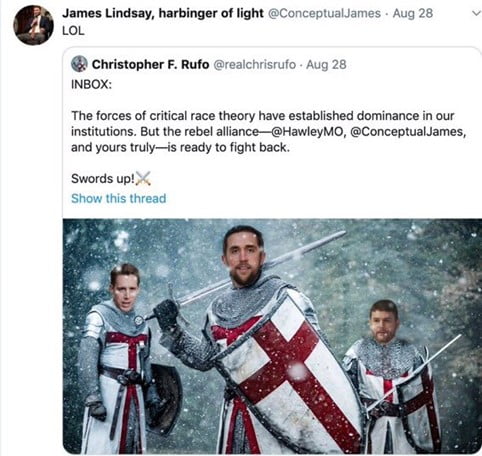

Finally, I learned that the boundary between the online world of trolls and the real world of vigilante violence is much thinner than we want to believe. Balch’s symbolism above is repulsive when it’s made explicit, but there is a whole ecosystem of dog whistle versions of Balch’s symbolism that have infested mainstream right-wing culture. Here is a prime example:

Notice again the use of Templar knights and the common theme of protecting Western culture from the invasion of globalist forces and their social justice ideologies. Either the individuals involved in creating and sharing this image don’t understand the memetic pool they’re swimming in, or they’re unconcerned about the fact. Neither option seems like good skepticism, as this symbolism and the corresponding ideologies present a serious obstacle to promoting compassionate and constructive discourse on a variety of controversial issues. Skeptics need to be highlighting the role of internet on-ramps in radicalizing young white men, not participating in that process.

We still need to be able to talk to each other, and we need it to go better than Monster Island, or we’re going to follow Monster Island into the ocean.